Usage¶

The Optimizer implements different optimization techniques, such as a semi-automatic sequential approach, Monte-Carlo simulations, and a global optimization technique using genetic algorithms. Establishing a statistical relationship between predictors and predictands is computationally intensive because it requires numerous assessments, each covering decades of data.

The calibration of the analog method (AM) is usually performed in a perfect prognosis framework. Perfect prognosis uses observed or reanalyzed data to calibrate the relationship between predictors and predictands. As a result, perfect prognosis yields relationships that are as close as possible to the natural links between predictors and predictands. However, there are no perfect models, and even reanalysis data may contain biases that cannot be ignored. Thus, the considered predictors should be as robust as possible, i.e., they should have minimal dependency on the model.

A statistical relationship is established with a trial-and-error approach by processing a forecast for every day of a calibration period. A certain number of days close to the target date are excluded so that only independent candidate days are considered. Validating the parameters of AMs on an independent validation period is very important to avoid over-parametrization and to ensure that the statistical relationship is valid for another period. To account for climate change and the evolution of measuring techniques, it is recommended to use a noncontinuous validation period distributed over the entire archive (for example, every 5th year). AtmoSwing users can thus specify a list of years to set apart for validation; these years are removed from the possible candidate situations. At the end of the optimization, the validation score is processed automatically. The different verification scores available are detailed here.
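The selection of validation years and independent candidate days can be sketched in a few lines of Python. This is only an illustration, not the Optimizer's implementation: the function names, the archive period (1981 to 2010), and the 30-day exclusion window are assumptions chosen for the example.

```python
from datetime import date, timedelta


def validation_years(first_year, last_year, every=5):
    """Return every `every`-th year of the archive, set apart for validation."""
    return list(range(first_year + every - 1, last_year + 1, every))


def candidate_days(target, archive_start, archive_end, excluded_years,
                   exclusion_days=30):
    """Yield archive days that may serve as analog candidates for `target`.

    Days within `exclusion_days` of the target date (an assumed window) and
    days belonging to a validation year are skipped.
    """
    day = archive_start
    while day <= archive_end:
        far_enough = abs((day - target).days) > exclusion_days
        if far_enough and day.year not in excluded_years:
            yield day
        day += timedelta(days=1)


# Hypothetical archive period and target date.
years = validation_years(1981, 2010)  # [1985, 1990, 1995, 2000, 2005, 2010]
target = date(1991, 7, 15)
candidates = list(
    candidate_days(target, date(1981, 1, 1), date(2010, 12, 31), set(years))
)
```

Spreading the validation years over the whole archive, rather than holding out one contiguous block, keeps both periods exposed to the same long-term trends.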

Requirements¶

The Optimizer needs:

Calibration methods¶

The Optimizer provides different approaches listed below.

Evaluation-only approaches¶

These methods do not seek to improve the parameters of the AM. They allow some assessments using the provided parameters. The different verification scores available are detailed here.

Single assessment: This approach processes the AM as described in the provided parameters file and assesses the defined score.

Evaluation of all scores: Similar to the single assessment, but assesses all the implemented scores.

Only predictand values: Does not process a skill score, but processes the AM and saves the analog values into a file.

Only analog dates (and criteria): Does not process a skill score, nor does it assign predictand values to the analog dates. It only saves the analog dates identified by the defined AM into a file.

Based on the classic calibration¶

The classic calibration is detailed here.

Classic calibration: The classic calibration procedure.

Classic+ calibration: A variant of the classic calibration with some improvements.

Variables exploration Classic+: Uses the Classic+ calibration to systematically explore a list of variables, levels, and hours.

Global optimization¶

Genetic algorithms: The optimization using genetic algorithms.

Random exploration of the parameters space¶

Monte-Carlo simulations: An exploration of the parameters space using Monte-Carlo simulations. These simulations were found to be limited in their ability to find reasonable parameters sets, even for moderately complex AMs (1-2 levels of analogy with a few predictors).
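The general idea of a random exploration can be illustrated with a minimal Python sketch. It does not reproduce the Optimizer's code: the parameter names, their ranges, and the `toy_score` function are hypothetical stand-ins, and the score is assumed to be one where lower is better.

```python
import random

# Hypothetical parameters space: names and ranges are illustrative only.
SPACE = {
    "analogs_nb": (10, 100),    # integer range
    "lat_min": (35.0, 50.0),    # float range
    "lon_min": (-10.0, 10.0),   # float range
}


def sample(space, rng):
    """Draw one parameters set uniformly at random from the space."""
    params = {}
    for name, (low, high) in space.items():
        if isinstance(low, int):
            params[name] = rng.randint(low, high)
        else:
            params[name] = rng.uniform(low, high)
    return params


def toy_score(params):
    """Stand-in for a skill score (lower is better); not an AtmoSwing score."""
    return (abs(params["analogs_nb"] - 40) / 40
            + abs(params["lat_min"] - 45.0) / 15
            + abs(params["lon_min"] - 5.0) / 20)


def monte_carlo(space, score, runs_nb, seed=0):
    """Assess `runs_nb` random parameters sets and return the best and all of them."""
    rng = random.Random(seed)
    tested = []
    for _ in range(runs_nb):
        params = sample(space, rng)
        tested.append((score(params), params))
    best = min(tested, key=lambda item: item[0])
    return best, tested


best, tested = monte_carlo(SPACE, toy_score, runs_nb=200)
```

Because every draw is independent of the previous results, the number of runs needed to land near a good parameters set grows quickly with the number of parameters, which is consistent with the limitation noted above.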

Outputs¶

The Optimizer produces different files:

A text file with the resulting best parameters set and the skill score ([…]best_parameters.txt).

A text file with all the assessed parameters sets and their corresponding skill scores ([…]tested_parameters.txt).

An XML file with the best parameters set (to be used further by AtmoSwing Forecaster/Downscaler; […]best_parameters.xml).

A NetCDF file containing the analog dates (AnalogDates[…].nc) both for the calibration and validation periods.

A NetCDF file containing the analog values (AnalogValues[…].nc) both for the calibration and validation periods.

A NetCDF file containing the skill scores (Scores[…].nc) both for the calibration and validation periods.
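As a convenience, the files listed above can be gathered from a results directory with a short Python helper. This is a sketch under assumptions: the elided parts of the file names are matched with wildcards rather than guessed, the helper name `collect_outputs` is hypothetical, and the recursive search assumes the files may sit in subdirectories of the results directory.

```python
from pathlib import Path

# Wildcards stand in for the elided parts of the file names.
OUTPUT_PATTERNS = {
    "best_parameters_txt": "*best_parameters.txt",
    "tested_parameters_txt": "*tested_parameters.txt",
    "best_parameters_xml": "*best_parameters.xml",
    "analog_dates": "AnalogDates*.nc",
    "analog_values": "AnalogValues*.nc",
    "scores": "Scores*.nc",
}


def collect_outputs(results_dir):
    """Group the Optimizer output files found under `results_dir` by type."""
    root = Path(results_dir)
    return {
        label: sorted(root.rglob(pattern))
        for label, pattern in OUTPUT_PATTERNS.items()
    }


# Example: report how many files of each type exist under the current directory.
for label, paths in collect_outputs(".").items():
    print(f"{label}: {len(paths)} file(s)")
```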

Graphical user interface¶

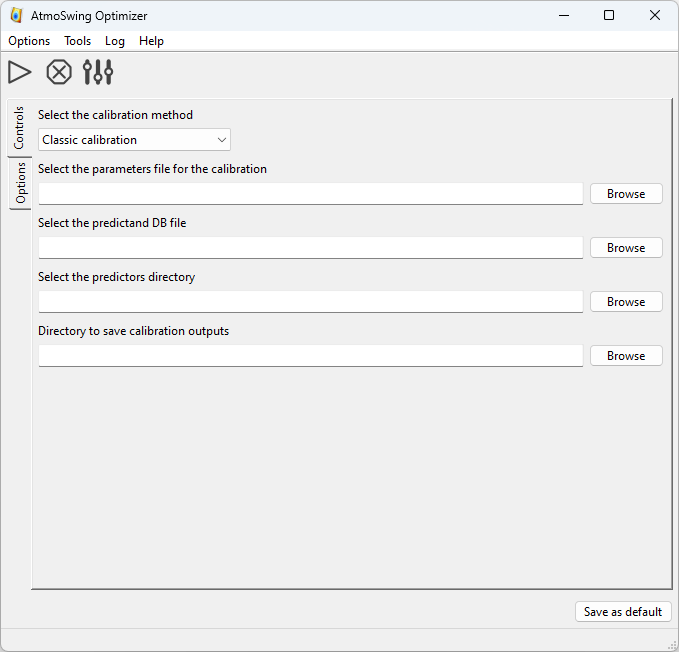

The main interface of the Optimizer is as follows.

The toolbar allows the following actions:

Start the optimization.

Stop the current calculations.

Define the preferences.

What is needed:

The calibration method to use

The directory containing the predictors for the archive period

The directory to save the results

All the options for the selected calibration method (in the Options tab; see below)

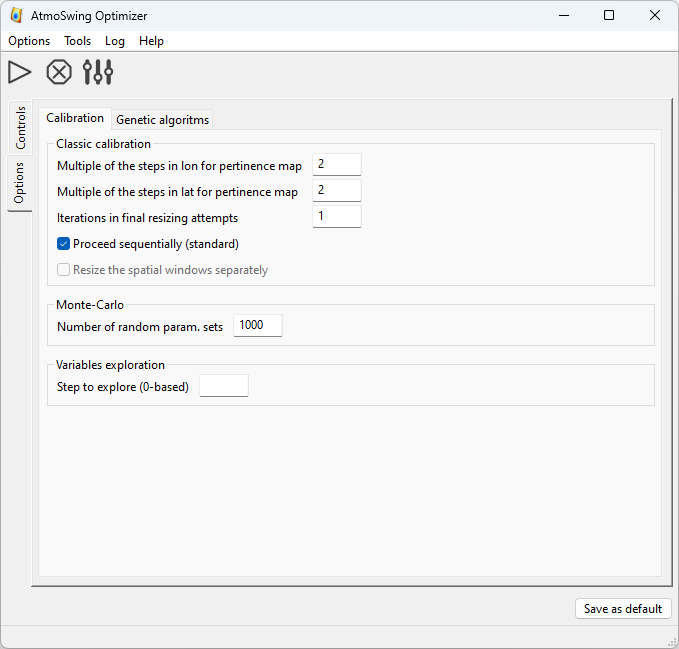

One tab defines the options for the classic calibration, the variables exploration, and the Monte-Carlo simulations. The details of the options are given here.

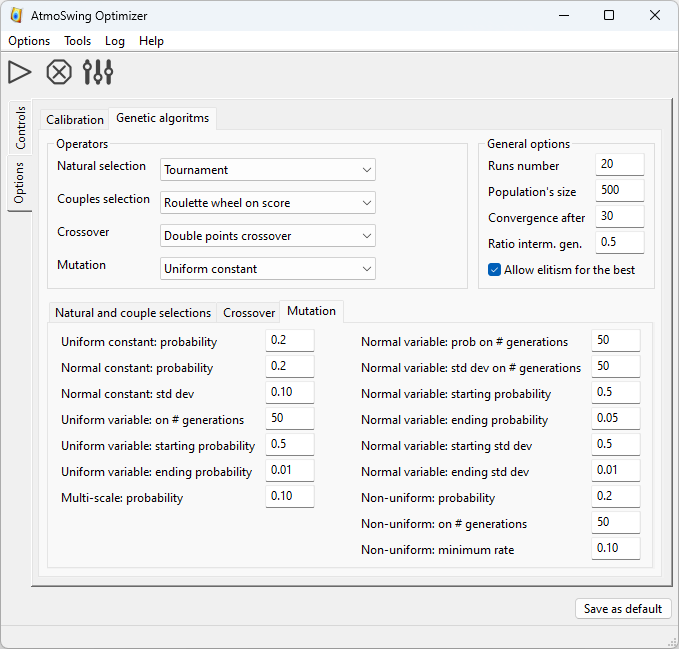

The other tab provides numerous options for genetic algorithms. The details of the options are given on the page of the genetic algorithms.

Command line interface¶

The Optimizer also has a command line interface, which is the preferred way of using it. The options are as follows:

- -h, --help

Displays help for the command line options

- -v, --version

Displays the software version

- -s, --silent

Silent mode

- -l, --local

Work in local directory

- -n, --threads-nb=<n>

Number of threads to use

- -g, --gpus-nb=<n>

Number of GPUs to use

- -r, --run-number=<nb>

A given run number

- -f, --file-parameters=<file>

File containing the parameters

- --predictand-db=<file>

The predictand DB

- --station-id=<id>

The predictand station ID

- --dir-predictors=<dir>

The predictors directory

- --skip-valid

Skip the validation calculation

- --no-duplicate-dates

Do not allow the same analog date to be kept several times (e.g. for ensembles)

- --dump-predictor-data

Dump predictor data to binary files to reduce RAM usage

- --load-from-dumped-data

Load dumped predictor data into RAM (faster load)

- --replace-nans

Option to replace NaNs with -9999 (faster processing)

- --skip-nans-check

Do not check for NaNs (faster processing)

- --calibration-method=<method>

Choice of the calibration method:

single: single assessment

classic: classic calibration

classicp: classic+ calibration

varexplocp: variables exploration classic+

montecarlo: Monte Carlo

ga: genetic algorithms

evalscores: evaluate all scores

onlyvalues: get only the analog values

onlydates: get only the analog dates

- --cp-resizing-iteration=<int>

Classic+: resizing iteration

- --cp-lat-step=<step>

Classic+: steps in latitudes for the relevance map

- --cp-lon-step=<step>

Classic+: steps in longitudes for the relevance map

- --cp-proceed-sequentially

Classic+: proceed sequentially

- --ve-step=<step_nb>

Variables exploration: step to process

- --mc-runs-nb=<runs_nb>

Monte Carlo: number of runs

- --ga-xxxxx=<value>

All GA options are described on the genetic algorithms page

- --log-level=<n>

Set the log level (0: minimum, 1: errors, 2: warnings (default), 3: verbose)
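For scripted runs, a command line can be assembled from the options listed above, for instance with a small Python helper. This is a hedged sketch: the executable name `atmoswing-optimizer`, the helper `build_optimizer_command`, and all file and directory names are assumptions for illustration, and the combination of options shown is not claimed to be the required minimum. Only flags documented in the list above are used.

```python
import shlex

# Method identifiers accepted by --calibration-method, as listed above.
CALIBRATION_METHODS = {
    "single", "classic", "classicp", "varexplocp", "montecarlo",
    "ga", "evalscores", "onlyvalues", "onlydates",
}


def build_optimizer_command(method, parameters_file, predictand_db, station_id,
                            predictors_dir, executable="atmoswing-optimizer",
                            threads_nb=None, skip_valid=False, log_level=None):
    """Return the argument list for one Optimizer run.

    The default executable name is an assumption; adapt it to your installation.
    """
    if method not in CALIBRATION_METHODS:
        raise ValueError(f"Unknown calibration method: {method!r}")
    args = [
        executable,
        f"--file-parameters={parameters_file}",
        f"--predictand-db={predictand_db}",
        f"--station-id={station_id}",
        f"--dir-predictors={predictors_dir}",
        f"--calibration-method={method}",
    ]
    if threads_nb is not None:
        args.append(f"--threads-nb={threads_nb}")
    if skip_valid:
        args.append("--skip-valid")
    if log_level is not None:
        args.append(f"--log-level={log_level}")
    return args


# Hypothetical file and directory names.
command = build_optimizer_command(
    method="classicp",
    parameters_file="parameters.xml",
    predictand_db="predictand.nc",
    station_id=1,
    predictors_dir="predictors",
    threads_nb=4,
)
print(shlex.join(command))
```

The returned list can be passed directly to `subprocess.run`, which avoids shell quoting issues with paths that contain spaces.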